Friday Funny: National Do a Grouch a Favor Day

jwilson, 2024-02-16T16:35:45+00:00

National Do A Grouch A Favor Day is a call to kindness on February

Exchange Ports: An Internet exchange point (IXP) is a physical location through which Internet infrastructure companies such as Internet Service Providers (ISPs) and CDNs connect with each other.

Corning’s fiber network solutions anticipate 5G-ready connectivity inside buildings. Replace legacy category & coaxial cabling architectures, making them a thing of the past. Future Ready. Expert Support.

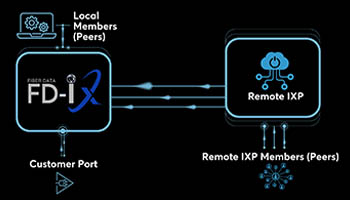

Public peering is performed across a shared network called an Internet Exchange Point (IX or IXP). Through an Internet Exchange, you can connect to many other peers using one or more physical connections…

Remote peering means peering without a physical presence at the local exchange point. This is possible because FD-IX partners have already connected their networks to FD-IX peering platforms in various locations…

Are you a data center operator looking for an additional revenue stream or an edge over your competition? Let Fiber Data Exchange / Midwest Internet Exchange create an exchange fabric in your data center.

In today's interconnected world, where the internet is a gateway to information, communication, and

Introduction: In the vast realm of the internet, where data flows ceaselessly across

In the ever-expanding landscape of the internet, the efficient and intelligent routing of data

Driving Efficiency: How Internet Exchanges Lower Costs for Members. Introduction: In the intricate

In the bustling world of data centers, where information flows ceaselessly and digital

In the bustling heart of every data center lies an often-overlooked yet critical component

What Are They? Fiber optic cables are slender, flexible strands made of glass or

What are Ethernet Cables? Ethernet cables are physical cables used to connect devices within

Improved Performance and Reduced Latency: Peering allows ISPs to exchange traffic directly, which results

Do You Have Questions About Internet Exchange Points (IXPs) and How They Can Help Your Business? Hit the Contact Us or Request a Quote Button Below.

The primary role of an Internet Exchange Point (IXP) is to keep local Internet traffic within local infrastructure and to reduce costs associated with traffic exchange between Internet Service Providers (ISPs)…